Lavender Generated Kill Lists

“There’s something about the statistical approach that sets you to a certain norm and standard…. The machine did it coldly. And that made it easier.”

– Senior Israeli Officer

An AI-based program that uses machine learning algorithms to rapidly mark Palestinians as targets and generate kill lists for thousands of air strikes.

- Lavender analyzes the data collected and stored by Israel’s system of mass surveillance of Palestinians. This data includes voice recordings, photos, cell phone locations, social media connections, phone contacts, and more for the nearly 2.3 million residents of Gaza (+972mag, 4/2/2024).

- Lavender tags nearly every one of the 2.3 million residents of Gaza with a number from 1 to 100 that supposedly expresses their likelihood of being a militant.

- Lavender kill lists allowed Israeli officers to spend only 20 seconds authorizing a bombing, often checking only that the individual was male.

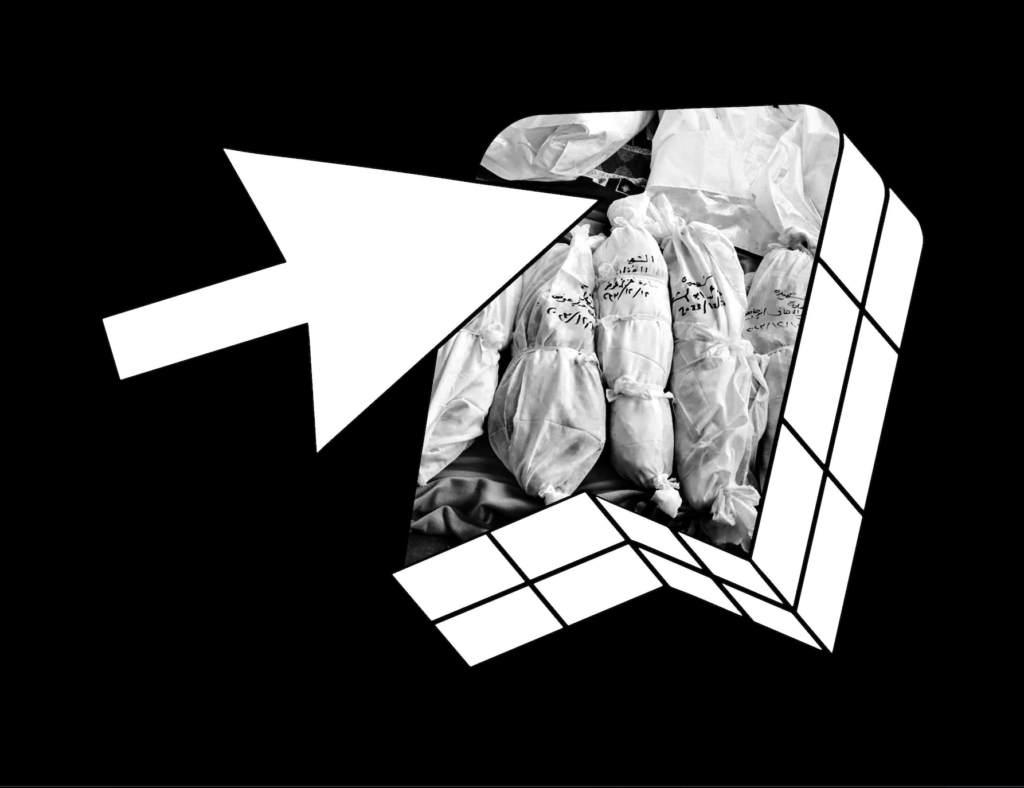

- Despite a known 10 percent error rate that commonly mistook police, civil defense workers, relatives, and residents with the same name or nickname for targets, the Israeli military used Lavender AI to generate kill lists with more than 37,000 names to authorize bombings.

- The threshold rating for who would be killed changed from day to day to maintain the rapid pace of killing.

- The Israeli military deemed the murder of 15-20 innocent civilians acceptable “collateral” damage for the assassination of every suspected junior militant, and of 100 innocent civilians for every senior commander.

“In a day without targets, we attacked at a lower threshold. We were constantly being pressured: ‘Bring us more targets.’ They really shouted at us. We finished [killing] our targets very quickly.”

– Israeli Intelligence Officer